I've been watching Apple keynotes with a keen interest for many, many years and I usually make a number of predictions; some correct, some completely off the mark. However, this is the first time I've decided to publish my thoughts prior to a keynote. I don't claim that all of these things will come true -- some of them are just on my wishlist -- but I will come back to this list tomorrow to see how accurate I was.

Update: My follow-up article is now available with details of the WWDC Keynote and how my predictions panned out.

The most crucial thing about the core Apple products -- Macs, iPhones, iPads, and iPods -- is not the hardware. There are cheaper, faster components for personal computers, there are lighter, more powerful smartphones, and I dare say we'll soon see some tablets which make the iPad's engorged iPod Touch design feel dated. Whilst they are all aesthetically pleasing, the hardware is actually fairly underwhelming (aside from a few innovations such as the Retina Display). What makes Apple products so desirable is the software, and it is for that reason that I think WWDC 2011 is going to be one of the most important conferences in recent years. With major updates for iOS and OS X at the same time, Apple has everything to gain if it gets it right, and a lot to lose if it gets it wrong. Throw into the mix a pre-announcement on cloud technology, and we could yet be surprised by what was generally perceived to be a keynote which would lack anything completely unexpected. Apple have released a press announcement and plastered the Moscone Center in posters showing that WWDC 2011 = iOS 5, Mac OS X Lion, and iCloud. For that reason, I'll take a look at each one in turn to give my predictions on what we might see announced tomorrow.

iOS 5.0

This is definitely the highlight for me (as an iOS developer) and I firmly believe that iOS 5 is going to be the biggest update to iOS since iOS 2.0 added the App Store. Why? Up until this point, iOS has been a leader in both innovation and user experience. However, the home screens are now feeling dated and Android, Windows Phone 7, and WebOS are creeping up in terms of features and UI (especially in the area surrounding notifications, widgets, and tiles). So, what will Apple add to iOS 5?

Notifications

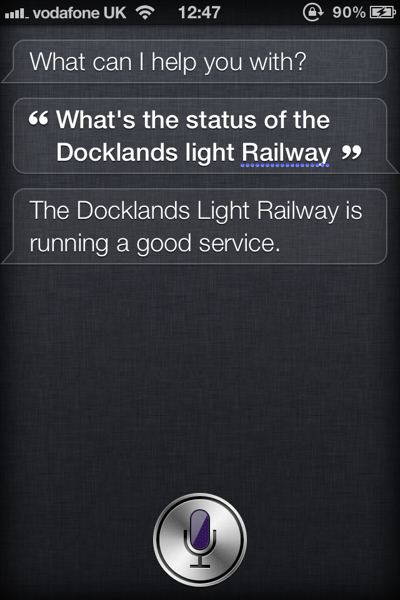

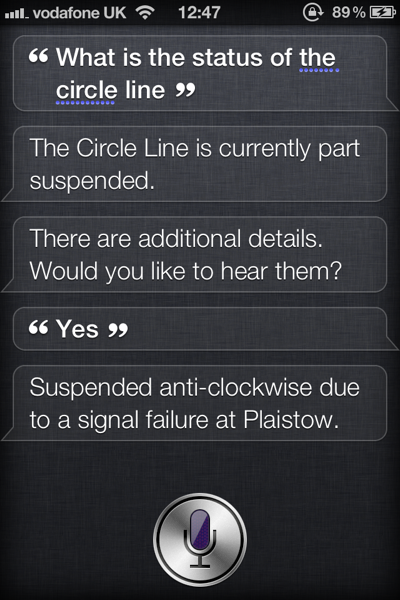

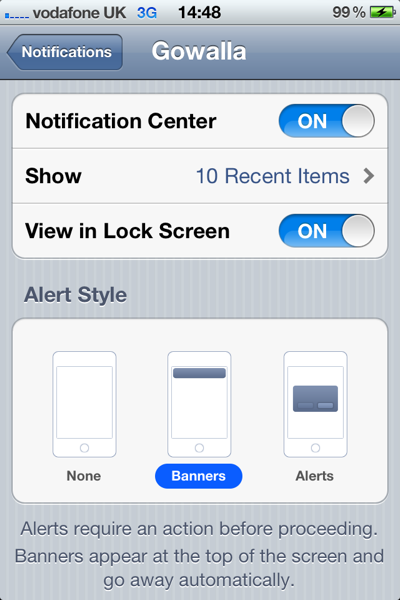

The notifications system in the current versions of iOS is pretty shameful when stacked up against the competition. Alerts come in one at a time and overwrite previous alerts (for example, if my app sends 2 pushes, you'll only see the second one; if another app sends a push, you won't even see mine, just theirs). The advent of local notifications, push notifications, and badge icons worked well as a stopgap for true multitasking, but the horrible modal UI just doesn't feel right on a phone anymore when consumers are getting more used to seeing unobtrusive notifications. When you start getting issues such as a push notification overlaying the phone panel (so you have to close a push before you can hang up on someone), the system is obviously broken. I expect this will be one of the major tentpoles of iOS 5 and we'll see a notifications system that is a cross between Android and Growl. On Android, you can pull down the status bar to see a list of all notifications, and every app that has an unread notification is visible in the status bar as a small icon. There are notifications for new emails, tethering, available wi-fi hotspots, and 3rd party apps (e.g. new tweets). However, they are totally unobtrusive.

I don't think this will be copied verbatim as the UI convention doesn't work (how many users know to pull down the status bar?). What I think more likely is that the search dialogue (swipe to the right from the first home screen) will be replaced with search and notifications. At the moment, the keyboard slides up automatically to take up half the screen and the search box takes up about 50px at the top. If the keyboard didn't come up, there would be a large amount of space to list notifications in a similar way to search results (e.g. by app with a badge or other count to show notification titles) with one-touch access to the application in question.

But how do you know when you have notifications? I would guess that there will be an Android-similar status bar icon but that there will also be Growl-style notifications in-app. These are already used for Game Center (ever noticed when you start up a Game Center enabled app that you'll get a dialogue slide in from the top saying "Welcome back, username"?) so it's not a stretch to expect that they would be available, and tappable, for 3rd party notifications.

Home Screens / Widgets

The home screens have been the same on iOS since version 1.0 and are now feeling slightly dated, especially when you consider the number of apps available on the App Store. iOS 4 saw the introduction of folders and 11 screens' worth of apps (meaning you can install over 2000 apps) but this still seems a way of adding more rather than making it easy to prioritise. I love the folders system, but there are other players now who are doing this better. I'm ashamed to say that Microsoft are one of them with their tiles system, which is integral to both Windows Phone 7 and the recently previewed Windows 8. It is most likely that the home screens will stay as they are but a new dashboard system (or perhaps "Mission Control" to keep in with Lion branding... see later) will be added in order to give you one or two screens of your most-used apps. This might come in the form of a gesture, an app, or just being the default landing page after you unlock the phone (with another swipe necessary to take you to all apps) but I think it likely we'll see some changes to how apps can be organised.

This leads me nicely into Widgets, which have been popular for a while, both in OS X in the form of Dashboard and on Android phones. On Android, some apps can drop widgets onto the home screen so you can have a space (maybe 4 app icons by 2 app icons in size) that shows you your Facebook updates or a wall clock, etc. The problems are that on Android there are no design conventions, so they generally look awful, and that they take up far too many system resources (e.g. the Facebook app used up 10% of my battery on average due to the way in which it checked for updates). I would expect Apple to solve both of these problems in a graceful way.

One last word on the home screens - I don't expect them to be got rid of or for widgets and apps to share the same screens (as on Android). The reason for this is that OS X Lion has an app overlay modelled on iOS but maintains its dashboard separately (interestingly with a swipe to the right from the apps page as per my suggestion for notifications). Whilst this could be an interesting tactic by Apple to lull us into a false sense of security (e.g. Lion beta has iOS home screens therefore home screens aren't changing but actually they are *dun dun daaaaaaaa*) I doubt it. I expect the home screens to remain as they are but for there to be a new dashboard screen to replace the first home screen (or some other way of accessing a widget-laced dashboard).

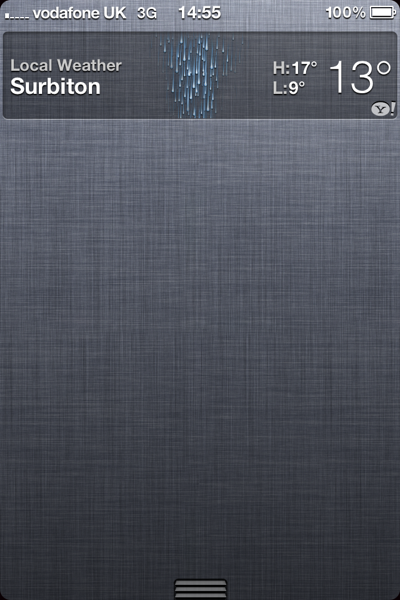

Lock Screen

Heavily connected to both of the above, the lock screen needs an overhaul. A system which combines notifications and widgets would be best (so you can see, without unlocking your phone, how many emails, texts, and Twitter mentions you have as well as what the weather is like in your area) but I think it's linked fairly intrinsically to the home screen system. It could be that the "Dashboard" I mention above is in fact the lock screen with home screens being unchanged but I think that would be a mistake - you can't add too much to the lock screen as the whole purpose is for it to have no touch input (in case it is activated by accident). Having a list of your notifications (so you can see if that *bing* was a notification of a baseball game starting or a new email) would be an excellent addition, but loading it up with touchable widgets would not be.

Updates

There are two kinds of updates available to iOS: app updates and system updates. At present, both suck and for pretty much the same reasons. The first major problem is the lack of incremental updating. If you want to update your device or an app, you have to download a whole new version of the software (which can be hundreds of megabytes in the case of system software and games) when there may only be a handful of lines of code changed. I don't think that 3rd party apps will get the ability to do incremental updates yet (as I imagine this to be hard for Apple and developers to implement and check) but there is no reason why iOS software can't be updated in this way - OS X has been doing it for years, with full combo updates available to download when things go completely wrong. The other issue (for iOS updates at least) is that you need to be tethered to iTunes. This wasn't an issue for iPods as it was generally accepted that you need to be connected to iTunes to add music, so doing updates through it was logical. However, iTunes is now a bloated mess, dealing with a multitude of media and updating. It is no longer suitable for updating things like phones and post-PC devices (the iPad). iOS devices need the ability to update themselves without being linked to a computer and iOS 5 is the time to make that happen (in fact it has already been discovered that this is on the cards thanks to an over-zealous copyeditor on iTunes). The update to iOS 5 will be done in the old-fashioned way but I expect that updating to iOS 5.1 (or 5.0.1) will be possible independently of a PC (and hopefully over a 3G connection if incremental updates are issued).
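To show what I mean by incremental updating, here is a minimal Python sketch. It is purely my own illustration and not how Apple (or anyone else) actually ships updates: the block size and the function names `make_delta` and `apply_delta` are made up for the example. The idea is simply to compare the old and new versions block by block and only send the blocks that changed.

```python
BLOCK_SIZE = 4  # hypothetical, tiny block size so the example is easy to follow


def split_blocks(data, size=BLOCK_SIZE):
    """Cut a byte string into fixed-size blocks (the last one may be shorter)."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def make_delta(old, new):
    """Build a delta containing only the blocks of `new` that differ from `old`."""
    old_blocks = split_blocks(old)
    new_blocks = split_blocks(new)
    changed = {}
    for index, block in enumerate(new_blocks):
        # A block is sent if it is new or differs from the block at the same position
        if index >= len(old_blocks) or old_blocks[index] != block:
            changed[index] = block
    return {"block_count": len(new_blocks), "changed": changed}


def apply_delta(old, delta):
    """Rebuild the new version from the old version plus the delta."""
    old_blocks = split_blocks(old)
    rebuilt = []
    for index in range(delta["block_count"]):
        if index in delta["changed"]:
            rebuilt.append(delta["changed"][index])
        else:
            rebuilt.append(old_blocks[index])  # unchanged, reuse what is on the device
    return b"".join(rebuilt)


old_version = b"AAAABBBBCCCCDDDD"
new_version = b"AAAAXXXXCCCCDDDD"
delta = make_delta(old_version, new_version)
rebuilt_version = apply_delta(old_version, delta)
```

In this toy case only one of the four blocks ends up in the delta, which is the whole appeal: the download scales with what changed rather than with the size of the software. A real system would need to cope with inserted or shifted data and verify the result, which this sketch doesn't attempt.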

To learn more about software updates on iOS, check out my post about iOS updates vs Android updates.

Other improvements

There are lots of other areas that could use improving but these are all fairly minor in comparison with the design overhauls above. Maps would do well to be updated as it pales in comparison to Android but this is largely in the hands of Google (see the problem there?) until Apple break away with their own mapping solution. I was hoping that would happen this time around but from the rumours flying around it would seem that we are going to be stuck with Google as the only built-in mapping solution for at least the next year. Game Center is another tool which needs a radical overhaul in order to make it relevant. Adding a gamification layer equivalent to Xbox Live seemed a good idea but Achievements are half-baked (you can't see a total gamerscore as per Xbox Live) and the interface is, well, horrible. Now that there are Universal apps, it would make sense for game progress to be syncable (although I'll come to this in the iCloud section below) as there is nothing more frustrating than having to play a game twice on two separate devices (or twice on one device, as you lose your app data if you do a restore on your phone). The file system is something which could do with a tweak as syncing Pages documents to iOS devices is quite painful through iTunes - this is something more likely to be solved by iCloud but the actual process of accessing files on the device may well be changed. Finally, there are things like "read it later" which we already know are coming to Safari (I bet Instapaper are happy about that) as well as minor updates to each of the major apps bundled with iOS so that Steve can say "there are over 100 updates" or something similar.

On the whole, the main basis of iOS 5 should be about a fundamental rethink of how apps are accessed and used. Whilst apps and the home screen system were revolutionary, they are now 4 years old and showing their age - it's time for an update which allows for unobtrusive notifications, easy access to app information via widgets, and lightweight updates available everywhere. Getting FaceTime to work on 3G (as promised) would also be a nice touch seeing as Skype has been doing it for months...

Lion

I'm not going to say a huge amount about Lion as I think we've seen the majority of the biggest pieces in the numerous developer previews (which despite NDA have been demoed extensively on YouTube and Apple rumour sites as well as on the official Apple website). There is obviously a huge UI overhaul with Mission Control and Launchpad, as well as a number of other innovations brought over from iOS (reverse scrolling, iOS scrollbars, new segmented controls, overhauled calendar interface, etc) but the most exciting things for me are Mail, Auto Save, Versions, and AirDrop.

Mail

The built-in Mail app has been lacking for a little while, especially now that competitors such as Sparrow are taking a lot of the limelight on the Mac App Store. With Lion, Mail is becoming more like the iPad version, which for me is one of the nicest email clients around. Apple will claim "conversations" as a big step forward but the truth is that Gmail has been doing it for a very, very long time (7 years in fact, as Gmail started up in 2004; I originally wrote "10 years or so" - thanks to @mattydsmith for the correction). However, it is a welcome improvement. I for one haven't got on well with Sparrow (bit too buggy for me at the moment) so I'm looking forward to the new version of Mail just for a few of the most basic improvements like conversations.

Auto Save

Just like iOS apps, Mac apps will now be built to take advantage of "auto save" - basically, whenever you change something, it is saved automatically rather than when you press the floppy disk icon (maybe this is the death of that button at last?). To be fair, this has existed for a long time in the OS itself (e.g. when you are in System Preferences, you never save anything apart from when you apply network settings - everything is saved as soon as you change a toggle) and apps have faked it with autosaves (even Office does that) but this is the first time that support is baked into the OS, making it a lot more efficient and reliable. A minor feature, maybe, but one that I'm sure will be welcomed by anybody who has lost data due to power failure or human error.

Versions

Related in many ways to Auto Save, Versions is basically Time Machine for files. Every time you alter a file, a backup is stored away somewhere so you can go back in time and get that version you wished you hadn't overwritten by accident. This has happened to me from time to time (especially when I get overzealous with my copy-paste commands and overwrite something I didn't mean to but then do something to break the "undo" button) so this will be another welcome addition. To an extent, I have this already for most of my work material as I have the packrat add-on for Dropbox (which means every single file in my Dropbox is versioned automatically) but it will be nice to have the feature when I'm not connected to the internet.

AirDrop

A feature that hasn't been mentioned much in the press is AirDrop, a way for you to share files easily between computers. Basically, with two computers near each other, opening up AirDrop will allow you to share files without any of the usual network hoops such as Bluetooth handshakes or passwords. Whilst this could be done already if you have set up file sharing, the point is that it works out of the box. The reason I'm excited about it is that I expect it to be compatible with iOS 5 so you can very easily share files between your Mac and iPhone / iPad without a) tethering via a cable or b) iTunes. Definitely something to watch out for.

In addition to the above, I would expect there to be a few new headline features announced tomorrow for Lion. iWork is well overdue for an update and I don't think it's a stretch of the imagination to expect iBooks to make its way to the desktop (or at least the iBooks store). Ideally I'd like to see iTunes completely rebuilt as separate apps with the Mac App Store becoming the place for Mac and iOS apps (maybe even Universal apps that run on all devices - e.g. download Twitter and it will work on iPhone, iPad, and Mac with one binary... there's no reason it can't be done. I'm not saying iOS apps running on Mac OS X, just that the Mac version could be bundled so for one payment you get all 3 versions). iTunes should be an audio playback utility (and store) only, with QuickTime taking over TV Shows and Movies and iBooks managing your ebooks. It astounds me that everything is still controlled from iTunes. Do I expect it to be updated tomorrow? Realistically, no. iTunes updates tend to come with iPod updates in September but I'd still hope that at some point (and Lion would be the best time to do it) some parts of iTunes could be split out to other apps. This is one thing that iOS does very well (e.g. the iPod app doesn't control everything) so taking this "back to the mac" would make sense.

iCloud

This is the big unknown quantity. What is iCloud? Apple have made the unprecedented move of announcing the name (and the icon) ahead of the announcement, although this was most likely to stop people wondering if the iPhone 4S (or iPhone 5) would be announced or not (hint: it won't be). Most bets are on iCloud being a storage locker for music so that you can stream your iTunes library but there are much better things that could be done. I've broken them down by type:

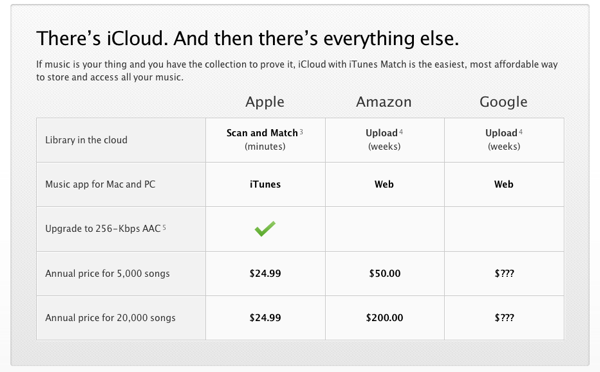

Music

Starting with music, it is generally accepted that iCloud is going to be heavily tied to iTunes. Music labels have apparently done deals with Apple so that streaming will be enabled but the real question is on implementation. Consensus appears to be that your iTunes purchases will be available to stream from Apple's servers to your iOS devices and Macs wherever you are. There would be no upload (as they have the master copies) and there is some advantage to this approach in that you don't need to have your iPhone synced with your 20GB library - you can just stream as and when you need it. The downsides are that a) you need to have purchased from iTunes (fair enough) and b) you need an internet connection. Apple tends to introduce new systems which are essentially crippled at launch and then build them up over time so I'd expect that the above is true and pretty much the extent of the music portion of iCloud. In future, we'll be able to upload our own MP3s to stream and cache things offline, etc, but for now it is most likely to be just previous iTunes purchases (although this is good news for me as I have many iTunes purchases which were lost due to a nonexistent backup strategy and now have to be re-bought as iTunes doesn't allow you to re-download music for free like it does with apps). I expect Apple to also launch a Spotify rival in the form of a monthly subscription to get streaming (and maybe offline caching) of iTunes content but this is more likely to come in September with new iPods and a new version of iTunes than at this announcement. I'll happily be wrong on that issue though as whilst I love Spotify, its library of music is nowhere near as comprehensive as Apple's.

Files

iDisk sucks. I don't know anybody who thinks otherwise. I also don't know anybody who uses a Mac who doesn't use Dropbox. This is a problem for Apple and one it needs to sort out fast. With their fancy new data centre and OS X Lion, there is no reason why they can't rule the online file storage space (especially if they tie it in with Versions and Time Machine along with fancy new routers to do the whole thing without your Mac being on). For this to work, there are two key requirements - uploading must be fast and easy (iDisk seems to take an age) and files need to be accessible everywhere (by which I mean, on iOS). That's pretty much it, nothing revolutionary. The keynote part of this will probably revolve around new Time Capsules and Airport Extremes which allow for your Mac to sync incredibly quickly with their internal hard drives and then for the router to sync with the cloud whilst your computer is off. This is better for the environment and uses fewer resources on the Mac (as Dropbox hogs all memory and CPU cycles if you have a lot of updates to do) but I expect it to be an optional extra - obviously you'll be able to use file sharing on iCloud without an Apple router, it'll just be better if you have one.

Apps

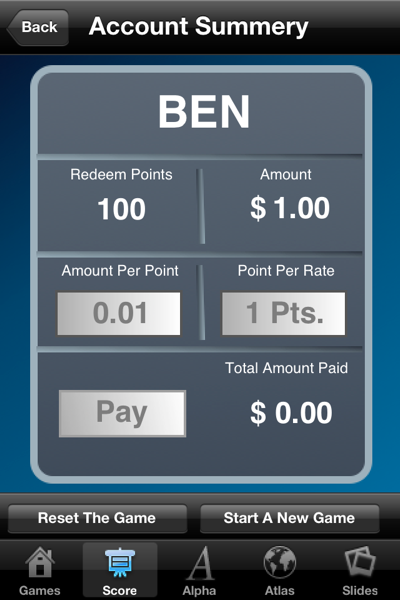

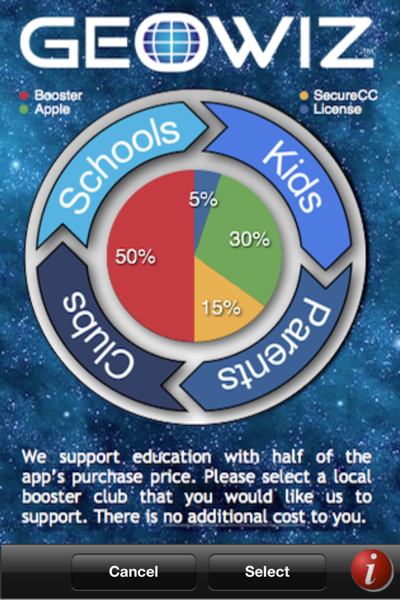

The best thing that iCloud could do would be to open itself up as a 3rd party API. This would allow app developers who don't have access to servers and web technology (which is a lot of them bearing in mind most come from software development backgrounds, not web development like me) to sync their data between their own apps with minimal hassle. A good example of this is Things, a to-do list app by Cultured Code. It's a fantastic app but its main failing is that there is not yet a public way for you to sync content over the internet between iOS apps (e.g. iPad and iPhone) or to the Mac (e.g. iPhone to Mac or Mac to Mac). It can be done with WiFi and they are working on cloud-sync solutions, but with a 3rd party API this would have been done already. Another good example is games like Angry Birds in which you lose your progress if you wipe your iPhone or switch to your iPad (or upgrade from an iPhone 3GS to an iPhone 4). With a 3rd party API, developers could sync game save data to the cloud ready for it to be downloaded on all of your devices so you never again lose your high scores. Developers win as their apps become more intuitive and cohesive and consumers win as they now have an ecosystem for their devices which maintains their data without them thinking about it. If anything, I would say that this is the biggest potential area for iCloud and I really hope to see this announced tomorrow.
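As a rough idea of what syncing game saves involves, here is a small Python sketch. To be clear, this is not any real iCloud API (nobody outside Apple knows what that looks like yet): the `merge_saves` function and the save format of level name to best score are assumptions I've invented for illustration. The point is that the cloud side only needs to combine the saves from each device and keep the best result for every level.

```python
def merge_saves(*device_saves):
    """Combine per-device saves, keeping the highest score seen for each level.

    Each save is a hypothetical dict mapping a level name to the best score
    achieved on that device.
    """
    merged = {}
    for save in device_saves:
        for level, score in save.items():
            # Keep whichever score is higher: the one already merged or this one
            merged[level] = max(score, merged.get(level, score))
    return merged


iphone_save = {"1-1": 30000, "1-2": 12000}
ipad_save = {"1-1": 25000, "1-3": 41000}
synced_save = merge_saves(iphone_save, ipad_save)
```

Here the iPhone's better score on level 1-1 survives and the level only played on the iPad is carried across, so either device can download `synced_save` and pick up where the other left off. Real apps would have messier data (and conflicts that "take the maximum" can't settle), which is exactly why a shared API would save developers so much work.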

MobileMe

The final area of iCloud is the takeover of MobileMe. I think it's fairly safe to say that MobileMe won't exist after tomorrow and will be absorbed by iCloud. However, there are updates that need to happen. At £59 a year, it's overpriced for a system which most people only use to sync their address books and calendars. Free email has been around for years (with Gmail having a superior offering), Flickr is better than the photo syncing and Dropbox is better than iDisk - the only thing worth having (aside from contact / iCal sync) is Find My iPhone which was recently made free for new iOS devices anyway. I won't speculate on pricing for iCloud (as we don't know what it is yet) but I'd expect that the syncing aspects will be free for anyone with OS X Lion or iOS devices in future (and just a nominal fee, around the $30 mark, for everyone else). The cost advantage of having syncing between all devices will net Apple more profit (just from apps and devices) than charging for that syncing service.

One More Thing...

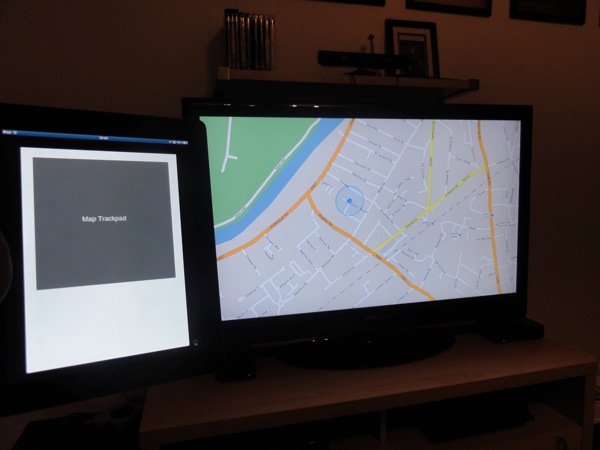

There is one other thing that I've been predicting for a while and I'm hopeful we'll see a push in the right direction tomorrow; Apple TV Apps. When I say apps, I don't mean native apps on the Apple TV. That won't work for the simple reason that the Apple TV only comes bundled with a basic remote which provides very little control for things more advanced than the YouTube app that comes bundled (and that's bad enough when it comes to text entry). No, what I mean is that iOS 5 apps on iPhone and iPad should be allowed to use the Apple TV as a secondary screen. APIs exist for this (in a way) with a cable in that an iPad-to-VGA adapter can let you display your apps on a secondary monitor (e.g. using the Keynote app you can display your presentation on a projector and notes on your iPad) and the iPad 2 introduced mirroring mode allowing you to play games at HD resolution on your TV with the component cable. However, I want to be able to play something like Real Racing wirelessly with my iPhone providing the app and acting as a wireless steering wheel, yet transmitting the data to the TV so that the Apple TV can display the game. There are issues to solve with lag (especially in HD gaming) but these could be solved by having an app payload sent over to the TV and cached locally as part of an initial load (kind of like buffering a movie) and then the remote only sending light data packets with control information. As an example, use the Apple Remote app with an Apple TV and you'll find it highly responsive - you can scroll and tap on the iPhone to control the Apple TV and it all works in real time. Just imagine if you could transmit your Keynote presentation wirelessly and then control it in the same way or play Angry Birds on the big screen. 
Throw in a built-in version of Game Center and suddenly Apple will be up against the entrenched console systems in the living room - you wouldn't even need to change the name AirPlay as the "play" could be about gaming rather than just AV playback. I've been talking about this ever since the iPad was launched but I think now is the time it could become a reality - it's a long shot but we'll see tomorrow!

Summary

In conclusion, tomorrow could be one of the many defining moments in Apple's history. If iOS 5 gets enough of a redesign to keep it fresh when compared to the newcomers, if OS X Lion launches soon with more upgrades than those previewed, and if iCloud introduces true syncing between them, then it will set the tone for Apple for the next 5 years. However, if iCloud is anything like the MobileMe launch (i.e. a shambles), then things will turn out very differently.

I'll be tweeting any major announcements as they happen and I'll chuck up a summary post tomorrow to see how many of my predictions have come true. In the meantime, if you have any opinions, fire me a message on Twitter or drop me an email.